The Battle of AI Agent Protocols: Why ACP Stands Out

We're witnessing a resurgence similar to the web services and microservices revolution of the early 2000s. Today, AI agents—autonomous, modular, intelligent—are rising, and communication standards are critical.

The current landscape features three major protocols:

MCP (Model Context Protocol) by Anthropic

ACP (Agent Communication Protocol) by IBM BeeAI

A2A (Agent‑to‑Agent Protocol) by Google

If you'd like to explore the other two protocols, check out my previous blog posts:

Google Agent-to-Agent (A2A) Protocol Explained — with Real Working Examples

Model Context Protocol (MCP): Connecting Local LLMs to Various Data Sources

What is the ACP protocol?

ACP (Agent Communication Protocol) is an open, HTTP-based protocol developed by IBM BeeAI (under the Linux Foundation) to enable interoperable communication between autonomous AI agents.

Agents can:

Discover other agents via standard metadata.

Send and receive structured, multimodal messages (text, JSON, embeddings, etc.).

Stream partial results (e.g., “thinking…” or intermediate steps).

Work in stateless or stateful ways.

Interact via REST APIs using standardized message formats.

ACP aims to be language-agnostic, framework-neutral, and easy to adopt. No heavy SDKs or extra dependencies are required. You can wrap your agents with minimal effort using simple Python (or JS) wrappers.

In this article, we’ll explore ACP in depth:

Why another protocol is needed

How it compares to MCP and A2A

ACP agent architecture

Key features and benefits

And a quick-start Python example to try it yourself

Why do we need another protocol?

In a nutshell:

MCP excels at plugging a single LLM into external tools or resources.

A2A excels at agent-to-agent communication and orchestration in a multi-agent system.

But neither fully delivers developer-friendly, low-boilerplate agent communication with easy deployment and discovery inside an enterprise.

ACP fills this gap:

Minimal FastAPI integration—deploy agents quickly

Seamless native streaming and session support

Manifest-based discovery within teams or organizations

Enterprise-grade robustness via IBM BeeAI

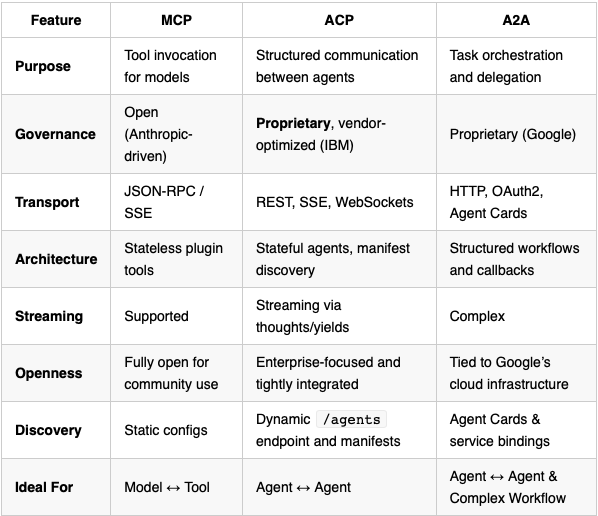

How does ACP differ from MCP and Google A2A?

Based on insights from IBM documentation and other comparative reviews, here’s a breakdown of the protocols:

To be honest, A2A and ACP are quite similar and can serve the same purposes. The key advantage of ACP is its lightweight design and its integration with the BeeAI platform, which provides agent discovery, execution, sharing, and lifecycle management, much like Hugging Face does for models but tailored to autonomous agents.

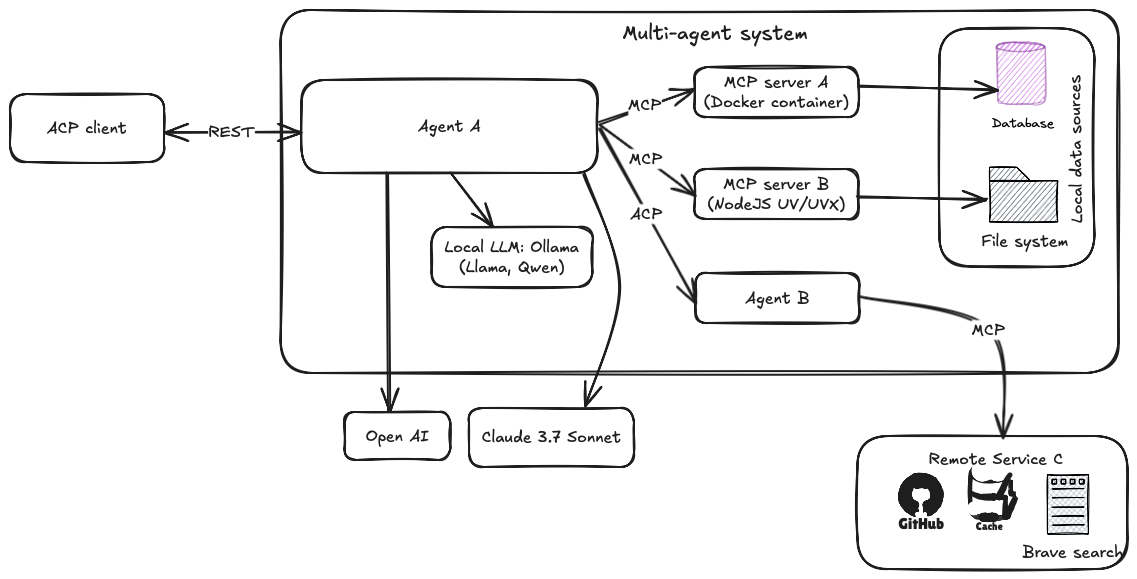

ACP Agent architecture

IBM’s ACP is designed to facilitate efficient, scalable, and standardized communication between AI agents within an enterprise ecosystem. It leverages RESTful APIs, streaming, and a manifest-based discovery system, often running on Python's FastAPI framework and integrated with IBM’s BeeAI platform.

Core Components

Agent Server

Hosts one or more AI agents as RESTful services.

Implements the ACP protocol endpoints to receive and respond to messages.

Supports streaming intermediate results (thoughts), session management, and message batching.

Typically built on FastAPI, enabling low-boilerplate, high-performance asynchronous communication.

Agent Client

Sends requests to remote agents over HTTP (or optionally via WebSocket/SSE).

Handles synchronous or asynchronous communication, invoking agents with input messages and receiving responses.

Manages sessions or conversation context for multi-turn interactions.
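
To make the client's role concrete, here is a minimal sketch of the HTTP request a client might build for a synchronous run, using only the Python standard library. The `/runs` path and the `agent_name`, `input`, and `mode` fields are assumptions based on my reading of the ACP REST interface, and `build_run_request` is a hypothetical helper name; check your server's OpenAPI UI for the exact schema. The request is only constructed here, not sent.

```python
import json
from urllib.request import Request

BASE_URL = "http://127.0.0.1:8000"  # assumed address of a local ACP server


def build_run_request(agent_name: str, text: str, mode: str = "sync") -> Request:
    """Build (but do not send) a POST request that asks an agent to run."""
    payload = {
        "agent_name": agent_name,  # assumed field name
        "input": [{"parts": [{"content": text, "content_type": "text/plain"}]}],
        "mode": mode,  # assumed values: "sync", "async", "stream"
    }
    return Request(
        url=f"{BASE_URL}/runs",  # assumed endpoint path
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_run_request("calculator", "2+2*3")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

In practice the SDK client hides this step, but seeing the raw request helps when debugging with curl or a proxy.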

Message Model

Messages are structured payloads containing one or more MessageParts, which can carry text, images, embeddings, or function call data.

Supports rich, multimodal interaction, not just plain text.
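
As a rough illustration of the message model, the sketch below builds a two-part message (plain text plus JSON) and serializes it. The `Part` and `Msg` classes are hypothetical stand-ins written for this example, not the SDK's own `Message` and `MessagePart` classes, and the field names are assumptions.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Part:
    """Hypothetical stand-in for one part of an ACP message."""
    content: str
    content_type: str = "text/plain"


@dataclass
class Msg:
    """Hypothetical stand-in for an ACP message: an ordered list of parts."""
    parts: list = field(default_factory=list)


message = Msg(parts=[
    Part(content="Summarize this table."),
    Part(content='{"rows": [[1, 2], [3, 4]]}', content_type="application/json"),
])

# Serialize to the kind of JSON body that would travel over HTTP.
wire = json.dumps(asdict(message))
types = [part.content_type for part in message.parts]
print(types)
```

Each part carries its own content type, which is what lets one message mix text with structured or binary data.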

Session Context

Enables agents to maintain conversational context over multiple message exchanges.

Sessions can store state, metadata, or intermediate reasoning steps.
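
The idea of session context can be sketched with a small in-memory store keyed by session id. This is only an illustration of the concept; `Session` and `SessionStore` are hypothetical names, and I am not claiming this is how the ACP SDK stores sessions internally.

```python
import uuid
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Session:
    """Hypothetical session record: an id plus the inputs seen so far."""
    session_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    history: list = field(default_factory=list)


class SessionStore:
    """In-memory store; a real server would likely persist this elsewhere."""

    def __init__(self) -> None:
        self._sessions = {}

    def get_or_create(self, session_id: Optional[str] = None) -> Session:
        """Return the existing session for this id, or start a new one."""
        if session_id is not None and session_id in self._sessions:
            return self._sessions[session_id]
        session = Session() if session_id is None else Session(session_id=session_id)
        self._sessions[session.session_id] = session
        return session


store = SessionStore()
first = store.get_or_create()
first.history.append("2+2")
same = store.get_or_create(first.session_id)  # second turn reuses the session
same.history.append("that times 3")
print(same.history)
```

A stateless agent simply ignores the session id, while a stateful one looks up the history before answering.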

Manifest and Discovery

Agents expose a manifest JSON (similar to .well-known/ai-plugin.json) describing their capabilities, endpoints, and message schemas.

The BeeAI platform or other registries consume these manifests to enable discovery and management of agents within teams or organizations.
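
Discovery then boils down to reading manifests and picking a suitable agent. The sketch below filters a hand-written manifest list by keyword. The JSON shape (`agents`, `name`, `description`) and the `find_agents` helper are assumptions made for illustration; a real manifest may carry different or additional fields.

```python
import json

# Hypothetical manifest listing; real field names may differ.
MANIFESTS_JSON = """
{
  "agents": [
    {"name": "calculator", "description": "Evaluates basic arithmetic"},
    {"name": "translator", "description": "Translates text between languages"}
  ]
}
"""


def find_agents(manifests: dict, keyword: str) -> list:
    """Return names of agents whose description mentions the keyword."""
    keyword = keyword.lower()
    return [
        agent["name"]
        for agent in manifests.get("agents", [])
        if keyword in agent.get("description", "").lower()
    ]


manifests = json.loads(MANIFESTS_JSON)
matches = find_agents(manifests, "arithmetic")
print(matches)
```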

BeeAI Platform (optional but powerful)

A management layer allowing enterprises to register, version, monitor, and share agents.

Provides dashboards, agent lifecycle management, logging, and observability.

Facilitates collaboration and scaling of multi-agent systems.

Now, let’s try a minimal example to create an ACP-compliant calculator agent and a client that calls it.

If you’re interested in AI using local LLMs, don’t miss our book Generative AI with local LLM for more in-depth information.

Code Example for quick start

For simplicity, we’ll create two Python scripts: one for a server agent that provides a calculator via a REST API, and another for an ACP client to invoke it.

The full source code is available in the GitHub repository.

Step 1. Create & activate a Conda environment

As usual, I am going to use Conda, so do the following:

conda create -n acp-env python=3.11

conda activate acp-env

Step 2. Install the ACP SDK

Yes, you do need the ACP SDK ;-) It is a minimal requirement.

pip install "acp-sdk[server,client]"

Step 3. Define and run an ACP agent

Create a Python file named "agent_server.py" anywhere in your local file system.

"""
agent_server.py
Run with: python agent_server.py
Then visit: http://127.0.0.1:8000/agents to see the agent manifest.
"""
import asyncio
import ast
from collections.abc import AsyncGenerator

from acp_sdk.server import Server, Context, RunYield, RunYieldResume
from acp_sdk.models import Message, MessagePart

server = Server()  # Creates the FastAPI app + /agents registry

# Only these AST node types are accepted; anything else is rejected.
ALLOWED_NODES = (ast.Expression, ast.BinOp, ast.UnaryOp, ast.Constant,
                 ast.operator, ast.unaryop)


@server.agent(name="calculator", description="Evaluates basic arithmetic like '2+2*3'")
async def calculator(  # Signature required by ACP
    inputs: list[Message],  # Chat‑style inputs
    context: Context
) -> AsyncGenerator[RunYield, RunYieldResume]:
    for msg in inputs:
        expr = msg.parts[0].content
        # stream a “thought” back to the caller
        yield {"thought": f"Computing {expr!r} …"}
        # do the work (very naive, suitable only for a demo)
        await asyncio.sleep(0.2)  # pretend it’s expensive
        try:
            tree = ast.parse(expr, mode="eval")
            for n in ast.walk(tree):
                if not isinstance(n, ALLOWED_NODES):
                    raise ValueError("unsupported input")
                # ast.Constant replaces the deprecated ast.Num; allow numbers only
                if isinstance(n, ast.Constant) and (
                    isinstance(n.value, bool) or not isinstance(n.value, (int, float))
                ):
                    raise ValueError("unsupported input")
            result = eval(compile(tree, filename="<expr>", mode="eval"))
        except Exception as exc:
            result = f"error: {exc}"
        # send the final answer
        yield Message(
            parts=[MessagePart(content=str(result), content_type="text/plain")]
        )


if __name__ == "__main__":
    # runs uvicorn with sensible defaults (incl. OpenTelemetry hooks)
    server.run(port=8000)
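
The expression check at the heart of the agent can also be tried on its own, without a running server. The sketch below is a standalone variant of that idea; `safe_eval` is a hypothetical helper name, not part of the SDK. It uses `ast.Constant`, the current node type for literals, and additionally rejects non-numeric constants such as strings.

```python
import ast

# Node types allowed in an arithmetic expression; everything else is rejected.
ALLOWED_NODES = (ast.Expression, ast.BinOp, ast.UnaryOp, ast.Constant,
                 ast.operator, ast.unaryop)


def safe_eval(expr: str):
    """Evaluate a plain arithmetic expression, rejecting anything else."""
    tree = ast.parse(expr, mode="eval")
    for node in ast.walk(tree):
        if not isinstance(node, ALLOWED_NODES):
            raise ValueError("unsupported input")
        # Only numeric literals are allowed, not strings, booleans, or None.
        if isinstance(node, ast.Constant) and (
            isinstance(node.value, bool) or not isinstance(node.value, (int, float))
        ):
            raise ValueError("unsupported input")
    # A validated tree contains no names, so no builtins are needed.
    code = compile(tree, filename="<expr>", mode="eval")
    return eval(code, {"__builtins__": {}}, {})


print(safe_eval("2+2*3"))  # prints 8
```

This is still demo-grade: an input like a very large exponent can consume a lot of CPU and memory, so a production agent would want limits on operand size or a proper expression parser.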

What is happening in the code above?

@server.agent() registers the function as an ACP‑compliant endpoint.

Each yield becomes part of the ACP Run stream (first a “thought”, then the final message).

The SDK auto‑generates an agent manifest that clients can discover at /agents.
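
If async generators are new to you, the streaming behaviour is easier to see in isolation. The following sketch uses plain asyncio with no ACP dependency: `fake_agent` is a hypothetical stand-in that yields a progress note followed by a canned answer, and `collect` consumes the items in order, roughly the way a streaming client would. The event dictionaries are invented for this example.

```python
import asyncio
from collections.abc import AsyncGenerator


async def fake_agent(expr: str) -> AsyncGenerator[dict, None]:
    """Stand-in for an agent: yield a progress note, then a canned answer."""
    yield {"type": "thought", "content": f"Computing {expr!r}"}
    await asyncio.sleep(0)  # hand control back to the event loop
    yield {"type": "message", "content": "8"}  # canned result, not computed


async def collect(expr: str) -> list:
    """Consume the stream item by item, preserving order."""
    events = []
    async for event in fake_agent(expr):
        events.append(event)
    return events


events = asyncio.run(collect("2+2*3"))
for event in events:
    print(event["type"], "->", event["content"])
```

The caller sees the "thought" before the final message because each `yield` hands one item to the consumer before the generator continues.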

Step 4. Call the agent (sync)

Create another Python file named "client.py".

# client.py
# Run after agent_server.py is running.
import asyncio

from acp_sdk.client import Client
from acp_sdk.models import Message, MessagePart

BASE = "http://127.0.0.1:8000"


async def main() -> None:
    async with Client(base_url=BASE) as client:
        run = await client.run_sync(
            agent="calculator",
            input=[Message(parts=[MessagePart(content="2+2*3")])],
        )
        print("Answer:", run.output[0].parts[0].content)  # -> 8


if __name__ == "__main__":
    asyncio.run(main())

Step 5. Try it out

We need two separate terminals (command-line consoles).

In terminal 1:

# Terminal 1

python agent_server.py

It will run the Python script and start a server on port 8000. The output should be similar to what is shown below:

python agent_server.py

INFO: Started server process [86567]

INFO: Waiting for application startup.

INFO: Application startup complete.

INFO: Uvicorn running on http://127.0.0.1:8000 (Press CTRL+C to quit)

Browse the OpenAPI UI at http://127.0.0.1:8000/docs to inspect every ACP endpoint the SDK exposes (runs, sessions, events, etc.).

Now, in terminal 2, run the ACP client:

# Terminal 2

python client.py

It will send the expression, wait for completion, and print the result, with no streaming involved, as shown below:

Answer: 8

That's all.

Where to go next

Stateful sessions – keep context between multiple calls using client.session()

Streaming via Server‑Sent Events or WebSocket – switch transport by passing stream=… options.

BeeAI Platform – publish your agent manifest so others can discover & chain it.

Combine with MCP – the same server can expose tools via MCP SSE (enable_mcp=True) if you also want tool calling.
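
If you go down the streaming route, it helps to know what a Server-Sent Events body looks like on the wire. The sketch below parses a small sample in the standard SSE text format (`event:` and `data:` fields, with events separated by a blank line). The `parse_sse` helper and the event names in the sample are hypothetical; the events an ACP server actually emits may be named and shaped differently.

```python
def parse_sse(raw: str) -> list:
    """Split an SSE body into a list of {"event": ..., "data": ...} dicts."""
    events = []
    for block in raw.strip().split("\n\n"):
        if not block.strip():
            continue  # nothing between separators
        name_of_event = "message"  # SSE default when no event field is given
        data_lines = []
        for line in block.splitlines():
            if line.startswith(":"):
                continue  # comment or keep-alive line
            field_name, _, value = line.partition(":")
            if value.startswith(" "):
                value = value[1:]  # one leading space is not part of the value
            if field_name == "event":
                name_of_event = value
            elif field_name == "data":
                data_lines.append(value)
        events.append({"event": name_of_event, "data": "\n".join(data_lines)})
    return events


# Hypothetical stream; the event names are made up for illustration.
SAMPLE = (
    "event: thought\n"
    "data: Computing '2+2*3'\n"
    "\n"
    "event: message\n"
    "data: 8\n"
    "\n"
)

parsed = parse_sse(SAMPLE)
for item in parsed:
    print(item["event"], "->", item["data"])
```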

In summary, though ACP and A2A appear similar, their developer experience sets them worlds apart:

ACP: Deploy intelligent agents with minimal code, enterprise governance, and BeeAI-powered discovery. Perfect for IBM-centric and hybrid setups.

A2A: Built for complex, cloud-scale agent orchestration—but involves more setup and boilerplate code.

Choosing the right protocol depends on your team's environment, deployment, and integration needs. And if you rely heavily on IBM products or IBM's AI ecosystem in production, ACP might be your low-code, enterprise-first winner.